Aidan Gomez

On Creating Artificial Intelligence

See: Facebook’s: “A Roadmap towards Machine Intelligence”

Recently, Tomas Mikolov, Armand Joulin and Marco Baroni of Facebook’s AI Research department released a paper titled “A Roadmap toward Machine Intelligence”; one that outlined a highly abstracted and theoretical guide on the development of artificial intelligence.

The paper proposed some novel and interesting methodologies in a notably accessible format and it’s well worth the short read.

I wish to take a look at some of the most thought-provoking points raised, as well as offer some more commentary on how we might make progress towards the lofty goal of AI.

Communication

The capacity for communicaton in intelligent machines is a necessity — and far from a unique suggestion — however, the way by which communication is generated and utilized is crucial to the methodology put forward. What is novel, is the suggestion of communication and natural language development providing a guarantee — of sorts — about the machine’s ability to learn.

The authors propose (to some degree) that the sole prerequisite of a machine capable of artificial intelligence, is the ability to learn. The machine begins training entirely naïve to its environment, purpose, and capabilities. It should be mentioned; I’m ignoring their propositions of pattern interpretation and internalization — as well as other suggestions, such as the elasticity of internal models — which I feel are redundant to discuss at present, as they are necessary prerequisites to a successful implementation of the proposed communication-based methodology.

From this base of “a machine that can learn” the authors propose that training must begin by first learning natural communication in order to interact with its teacher and the environment.

A natural learning approach

Humans are master replicators, and weak innovators; as such, most of our technological success has drawn from our ability to recognize patterns and reverse-engineer our environment. It seems only logical to conduct the training of our intelligent technology in the same manner by which we train ourselves.

After birth, a child is little more than a blob of overwhelmed carbon. It is hard coded only with the most dire necessities for life: cry and flail when hungry, cry and flail when in pain, cry and flail when alone, etc. As infants, we begin attempted interpretation of some extremely complex input — our senses. To begin with, we are pretty much useless at it. Luckily, our brains are designed to recognize and react to patterns, so even with very few tools in our belt, we are able to improve our cognition rapidly.

We aren’t born with language, we learn it from our input; the reaction of our teachers (parents) provides positive feedback, and the connection between language and the physical world provides a tool to express our desires (more on desire, later.)

This same idea applies to the suggested training methodology; give our machine the most distilled set of tools necessary for it to function and have it prove itself by learning and orienting itself towards the environment. Our machine should be able to find patterns in its input and react with an output that maximizes positive environmental response — communication!

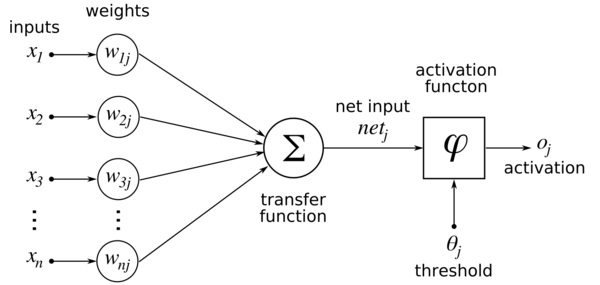

The most successful algorithms capable of learning complex patterns — to a high degree of abstraction — are without question neural networks. As the name suggests, these algorithms and models are based on our own brain.

Intelligence

Like many in and around the field of computer science, I spend countless hours in the shower pondering the definition of intelligence. What is it that makes humanity unique to all other life? One trait that seems to be most promising is our innate ability to recognize high order patterns, within abstract data. While nearly all lifeforms are capable of some pattern recognition and, in some cases, complex pattern recognition (see: Human Facial Recognition in Crows); humans seem to have a unique propensity for recognizing patterns in unnatural data — beyond the realm of our senses and into the realm of mental abstractions. I would conjecture that it is our ability to recognize patterns that spurred the development of our complex communication tool, language.

For most animals, expressing observations about patterns present in their physical surroundings suffices for nearly all communication needs. In humans, our ability to recognize abstract patterns required us to be able to communicate these purely mental constructs (independent of our senses) to others in the group. Thus, allowing for distributed brainpower in decision making, as well as faster, more complete transfer of complex ideas.

It’s difficult to imagine how a great ape would express a thought pattern such as, “the yield of these fruit-bearing trees seems to be increased if we allow more sunlight to hit its leaves” to its kin. So, regardless of a great ape’s ability to innovate, the passage of complex knowledge ends with each individual.

Training

### Assume: - we begin with a machine capable of receiving input and giving output; - it is capable of learning by reinforcement (+/– feedback) from its teacher; - it is void of any information about how to interact with its environment.

Method:

- The machine will receive input in the form of natural language instructions (i.e. “turn left”) from its teacher

- The machine will query and instruct upon the environment

- The machine will respond to the teacher (in simple cases, it will relay the actions it took within the environment)

We can see that, to begin with, the machine will simply spew random characters as a response to the teacher’s instructions – resulting in negative feedback from the teachers. Eventually, the machine will happen upon a correct random input, which will spur the education of its communication-based interaction with the teacher and environment.

So, the training method proposed by the authors begins by first teaching our machine to communicate with its teacher and environment; from here, incremental steps in complexity are taken, introducing the machine to further nuances and ambiguity in language.

The authors stress the importance of small-batch training: the ability to learn from very little exposure to phenomena. Most training regimes typically rely on large amounts of data — with uniform representation frequency for each output category. Considering the massive amounts of research and subsequent innovation, I have no doubt that new solving strategies, that more effectively deal with small batch inference, will be developed. Another point I’ll raise shortly is that of outliers, which I believe to be significant in any training regiment.

Elasticity

Another point that the authors have raised is the idea that any model capable of using their training methodology must be able to increase its own internal complexity, dynamically, to scale as problems become more complex. This is something that I am in complete agreement with. I have always found that NNs reliance on a programmer to decide upon the complexity of its parameter space seems wholly unnecessary. A massive step for machine learning will be creating models that scale their parameters and complexity elastically; expanding to incorporate recurring outliers that may represent an unconsidered feature or category, contracting to eliminate redundancies.

Methods for network reduction already exist (see: neural network reduction), and I have no doubt that in the next couple of years we will see training regimes that stack the use of multiple networks (as in the cited paper) to manage complexity.

Modularity

This leads into how we presently apply neural networks; we use neural networks disjointly to interpret particular types of data. Only very recently in the field of deep learning (for instance, Google’s Inception network) have we been joining sub-networks together into a larger net to solve highly complex problems. I think this is an incredibly intuitive ‘next step’: we have a neuron which can solve extremely simple problems — inform when input is above a threshold — then, we combine these into simple networks to solve more complex problems. The next logical step is to combine nets into a ‘super-network’ that uses sub-networks for sub-problems and then the overarching network to extract the super-conclusion from the sub-networks’ sub-conclusions.

Humans use a similar decision making system: we leverage information from all of our inputs (senses) to draw conclusions about our environment and how we should react it. Neural networks can now interpret images, sounds and more abstract data (see: language modelling), the logical next step is combining these modular components to form a larger system.

I am convinced that the combination of modularity and elastic scaling will provide us with the best chance of creating effective decision making machines. It’s only to be expected that a network — when presented with enough outlier data of a similar form — will allocate a new sub-network to try and interpret this new phenomena.

Memory

Another key tool to human learning is our memories. We store a vast amount of information in highly distilled forms from a lifetime of experiences. Recent information is held in a highly detailed and uncompressed format, while older information (that has been thoroughly processed) can be stored in a more obscure, reduced format. Oddities or outliers are ‘embossed’ in our minds (see: Bayesian Surprise Attracts Human Attention); they are easy to find and often when our minds wander, we wander to these events. It’s as if our minds dedicate idle processing power to drawing conclusions about events that we have trouble categorizing.

When we experience new information, we tend to look back into our past experiences to see if it can draw any more insight from them; this way, we can draw out potential value from this new information, via connections with experiences we are familiar with. Neural networks in their present state, are largely naïve to their past experience. They extract consistent and generalized features, while ignoring nuances and discarding unique instances. Perhaps as networks become more complex and varied, we will develop a method of storing and grouping previous input that is particularly unique. The net may then have a training step that looks at these unique instances and tries to determine whether a new category (elasticity) or perhaps a new network (modularity) could be used to extract value from these curious points of data.

Curiosity

This is something that I’ve never considered when thinking about what properties should make up AI, and it is an enormous oversight on my part. Curiosity seems so intimately linked to human development, that to have it anywhere but at the forefront of our minds when creating intelligent machines could severely limit how quickly we solve the problem.

I would define curiosity as the pursuit of (better: desire for) previously unexperienced information. This plays directly into the presented notions of a machine that effectively scales to include outliers and new data. The authors suggest that the machine be given ‘free time’ to explore its environment and apply the skills it has been developing in training, as well as learn new ones. It’s this idea of curiosity that will spark the machine intelligence; it’s purpose should be to learn as much about the world as possible, and it should achieve this through experience.

Conclusion

I think that the ideas presented in Facebook’s paper are both interesting and easily consumed; allowing for collective brainstorming across areas of expertise.

As we learn more about our own neurological learning processes (see: Science’s ‘Holes’ in neuron net store long-term memories), the more effective our computational models will become.

Our success on this topic is entirely dependant on the collaboration of two fairly distant fields: Biology and Computer Science (Man and Machine?).

A good question for humanity to start asking itself: if we are capable of creating a curious, virtuous, and sustainable form of life via intelligent machines; is the fear of this machine becoming our successor well founded or necessary?

If you enjoyed the article please recommend, and feel free to follow my publications!